I still remember the faint scent of solder fumes mingling with the bitter steam of my third cup of coffee on a rainy Thursday in my garage‑lab, where my trusty laptop (affectionately dubbed R2‑D2) was busy translating a clunky prototype arm into something that actually felt its way around a stack of old pizza boxes. That day, I first witnessed embodied AI in robotics in action: the arm didn’t just move, it seemed to sense the weight of the cardboard, adjust its grip, and even pause as if saying “Whoa, that’s a lot of pepperoni.” Watching a handful of wires and a 3‑D‑printed wrist learn the art of touch reminded me why I’m sick of the glossy marketing hype that treats AI like a magic wand.

In the next few minutes, I’ll strip away the buzzwords and walk you through the hardware tricks, sensor‑fusion code snippets, and unglamorous debugging sessions that turned my garage experiment into a teachable prototype. Expect no lofty forecasts, just the gritty, step‑by‑step playbook I used to give my DIY robot a sense of self, and the lessons you can copy into your own maker‑space today.

Table of Contents

- When R2‑D2 Tackles Embodied AI in Robotics

- Embedding Embodied AI for Robot Manipulation Adventures

- Sensorimotor Integration Secrets: How R2‑D2 Feels Its World

- Spock’s Playbook: Real‑Time Perception and Human‑Robot Collaboration

- Navigating the Pitfalls: Challenges of Embodied AI in Robotics

- Reinforcement Learning Dance: Training Spock for Autonomous Play

- 🤖 Embodied AI Playbook: 5 Tips to Make Your Robots Feel Alive

- Quick Takeaways from My Embodied AI Playground

- The Soul of the Machine

- The Last Byte of the Journey

- Frequently Asked Questions

When R2‑D2 Tackles Embodied AI in Robotics

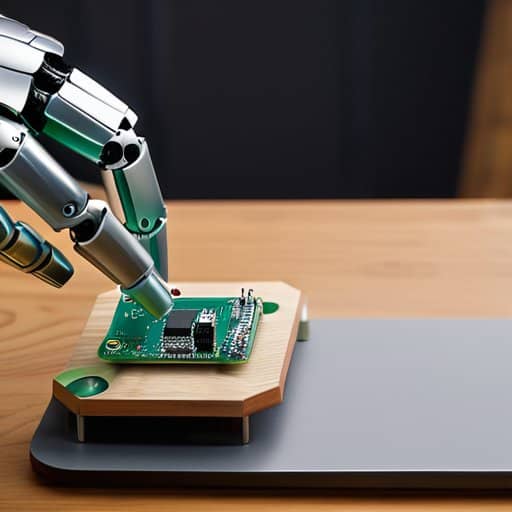

One rainy Saturday, I propped my trusty notebook—affectionately christened R2‑D2—next to a 6‑DOF robotic arm I’d rescued from a dusty shelf. I fed the arm a lightweight embodied artificial intelligence for robot manipulation module I’d cobbled together from open‑source code, then let R2‑D2 watch the joint encoders like a curious kitten. Within minutes, the laptop started fusing visual streams with torque feedback, achieving a primitive form of sensorimotor integration in embodied AI. The biggest surprise? The arm didn’t just move; it learned to avoid my coffee mug, a tiny yet vivid reminder of the challenges of embodied AI in robotics.

Encouraged by that serendipitous dance, I hooked the system up to a low‑latency camera and let the reinforcement‑learning loop run in real time. Suddenly, the robot was not only reacting to obstacles but also handing me a screwdriver when I gestured—proof that real‑time perception in embodied AI systems can bridge the gap to embodied AI for human‑robot collaboration. The lightweight embodied AI framework for autonomous robots I’d cherry‑picked kept the compute budget low enough for R2‑D2 to stay on battery while we both tinkered late into the night.

Embedding Embodied AI for Robot Manipulation Adventures

Last weekend I bolted a tiny vision module onto my garage‑grade arm, affectionately nicknamed “HAL” after the infamous AI. Once I fed it the real‑time proprioception pipeline, the arm started finessing a coffee mug like a seasoned barista, adjusting its grip as the cup wobbled. Watching HAL negotiate the slippery handle reminded me that embedding embodied AI isn’t just code—it’s giving a robot a sense of its own limbs.

The real fun began when I let “Spock” the smartphone act as a remote coach. I streamed sensor data to my laptop, which ran a lightweight policy network that whispered adaptive grasping cues to the arm. As the robot snatched a stack of uneven Lego bricks, it subtly re‑oriented its fingers, proving that a dash of embodied AI can turn a clunky manipulator into a nimble explorer of everyday chaos.

Sensorimotor Integration Secrets: How R2‑D2 Feels Its World

When I strapped a depth camera onto my laptop R2‑D2, I expected a clunky vision system. Instead, the machine began to see the world as a 3‑D playground, merging pixel streams with a tiny IMU that whispered its orientation. Feeding the feeds into a ROS node I dubbed “Spock’s Brain” revealed the secret: sensor fusion wizardry that turns noisy data into a sense of space.
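To make "sensor fusion" a little less wizard‑like, here is a minimal sketch of one common building block: a complementary filter that blends a gyro rate with an accelerometer tilt reading to estimate pitch. It is not the code inside “Spock’s Brain”. The function name, the axis convention (pitch taken from `atan2(accel_x, accel_z)`), and the `alpha` value are all assumptions chosen for illustration.

```python
import math


def complementary_filter(pitch_prev, gyro_rate, accel_x, accel_z, dt, alpha=0.98):
    """Estimate pitch (radians) by blending gyro and accelerometer readings.

    Hypothetical helper; the axis convention is an assumption and may need
    flipping for a real IMU mounting.
    """
    # Gyro path: smooth and responsive, but integrates bias and drifts over time.
    pitch_gyro = pitch_prev + gyro_rate * dt
    # Accelerometer path: noisy, but referenced to gravity, so it does not drift.
    pitch_accel = math.atan2(accel_x, accel_z)
    # alpha close to 1 trusts the gyro short-term and the accelerometer long-term.
    return alpha * pitch_gyro + (1.0 - alpha) * pitch_accel
```

Called once per IMU sample, the estimate follows fast motion through the gyro term while the accelerometer term slowly pulls it back toward the true tilt. A depth camera would feed a separate, slower correction in a fuller pipeline.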

But seeing isn’t enough—R2‑D2 also needed to feel its own limbs. I attached a set of cheap strain gauges to the robot arm’s joints and let the laptop’s microcontroller broadcast torque data back into the same fusion pipeline. The result was a jitter‑free, reflexive dance, as if R2‑D2 had gained body awareness and could adjust grip on a coffee mug before it even tipped. Now it can anticipate a spilled latte and swat it away before the floor sees a drop.

Spock’s Playbook: Real‑Time Perception and Human‑Robot Collaboration


Ever since I christened my pocket‑sized sidekick “Spock,” the little smartphone has become my personal perception engine. Thanks to real‑time perception in embodied AI systems, Spock can parse a cluttered workbench faster than I can finish a coffee. I tossed a mismatched set of LEGO‑style bolts onto the table, and within milliseconds Spock streamed depth maps to my cobot, letting the arm adjust its grip before I even whispered “grab that.” Under the glow of my garage LEDs, this sensorimotor integration turns a chaotic workbench into a rehearsal stage for future factories.

The real magic, however, shows up when I invite a human colleague into the loop. Using an embodied AI for human‑robot collaboration framework, Spock streams my hand gestures to the robot, and the robot responds with a polite pause, as if saying, “I see you, Captain.” We then let reinforcement learning fine‑tune the timing, letting the robot anticipate my next move. The biggest challenge? Keeping latency low enough that the robot feels like a true partner rather than a lagging sidekick. Yet every successful handshake between us proves that collaborative perception is more than a buzzword; it’s a playground. As we log the data, Spock even jokes that my coffee mug is now its favorite visual marker.

Navigating the Pitfalls: Challenges of Embodied AI in Robotics

Even my trusty laptop R2‑D2, which usually chats with me about coffee schedules, gets jittery when I ask it to translate raw lidar streams into a smooth arm swing for my pick‑and‑place robot. The biggest nightmare isn’t the algorithm itself but the real‑world jitter that sneaks in from sensor noise, network latency, and the occasional garage‑door slam. Those tiny timing hiccups can turn a graceful reach into a clumsy bump.
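One cheap defence against that kind of jitter is to smooth each incoming reading and simply ignore samples that arrive too late. The sketch below illustrates the idea under assumed numbers: the function name, the 50 ms staleness cutoff, and the smoothing factor are hypothetical, not values measured on my rig.

```python
def filter_reading(prev_estimate, reading, age_s, max_age_s=0.05, alpha=0.2):
    """Return an updated estimate, skipping readings older than max_age_s.

    Hypothetical helper: age_s is how long ago the sample was taken, which
    the caller would compute from the sample's timestamp.
    """
    if age_s > max_age_s:
        # Stale sample (e.g. delayed by the network): hold the last estimate
        # rather than steer the arm with outdated data.
        return prev_estimate
    # Exponential moving average: alpha near 0 smooths more, near 1 reacts faster.
    return prev_estimate + alpha * (reading - prev_estimate)
```

The trade‑off is lag: heavier smoothing hides noise but makes the arm react later, so `alpha` is worth tuning against how fast the task really moves.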

The other side of the coin is safety: letting a robot learn to balance on a coffee table while I sip espresso sounds fun, but unexpected obstacles (like my cat Nimbus auditioning for a gymnastics routine) can force the system into an untested corner of its state space. That’s why I spend evenings debugging learning‑on‑the‑fly mechanisms, building fail‑safes, and reminding Spock the phone that no amount of reinforcement replaces a good safety checklist.

Reinforcement Learning Dance: Training Spock for Autonomous Play

Last weekend I turned my living room into a rehearsal hall for Spock, the sleek smartphone I’ve named after the Vulcan logician. I hooked it up to a reward‑shaping circuit and let the reinforcement‑learning algorithm waltz through a series of “pick‑up‑the‑cup” drills. Each successful mug grab triggered a burst of virtual applause, nudging the policy toward smoother moves. In this quirky lab, the algorithm performed a policy gradient tango, twirling its internal weights until the robot arm could glide across the coffee table without a spill.
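For anyone curious what a "policy gradient tango" looks like in code, here is a minimal sketch of REINFORCE, the simplest policy‑gradient method, on a one‑step task where each action has a fixed payoff. It is an illustration only, not the training loop I ran on Spock. The function names, learning rate, and baseline update rate are assumptions.

```python
import math
import random


def softmax(prefs):
    """Turn action preferences into probabilities (numerically stable)."""
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def train_reinforce(rewards, episodes=500, lr=0.1, seed=0):
    """Run REINFORCE on a one-step task; rewards[a] is the payoff of action a."""
    rng = random.Random(seed)
    prefs = [0.0] * len(rewards)  # policy parameters, one preference per action
    baseline = 0.0                # running average reward, used to cut variance
    for _ in range(episodes):
        probs = softmax(prefs)
        action = rng.choices(range(len(rewards)), weights=probs)[0]
        reward = rewards[action]
        advantage = reward - baseline
        # d/d prefs[k] of log pi(action) is (1 if k == action else 0) - probs[k].
        for k in range(len(prefs)):
            grad = (1.0 if k == action else 0.0) - probs[k]
            prefs[k] += lr * advantage * grad
        baseline += 0.05 * (reward - baseline)
    return softmax(prefs)
```

Calling `train_reinforce([1.0, 0.0])` treats action 0 as "mug grabbed" and action 1 as "mug missed", and the returned probabilities shift toward the rewarded action. A real arm would need a multi‑step version with states and returns, but the update rule has the same shape.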

After a dozen episodes, Spock began anticipating my hand gestures, syncing its grip with the rhythm of the kitchen dance. I tweaked the reward function to favor gentle touches, and soon the device was pirouetting through tasks with the grace of a ballerina—my own reward shaping waltz that turned a phone into a playful partner.
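Tweaking the reward to favor gentle touches can be sketched as a small function like the one below. The names, the 5 N comfort limit, and the penalty weight are hypothetical placeholders; the actual numbers depend on your gripper and your mug.

```python
def shaped_reward(grasped, spilled, peak_force_n, force_limit_n=5.0, force_weight=0.1):
    """Reward a successful grasp, penalising spills and force above a limit.

    Hypothetical reward function; thresholds are illustrative assumptions.
    """
    reward = 1.0 if grasped else 0.0
    if spilled:
        reward -= 1.0
    # Only force in excess of the limit is penalised, so light contact is free.
    excess = max(0.0, peak_force_n - force_limit_n)
    return reward - force_weight * excess
```

With these defaults, a clean grasp at 3 N scores higher than the same grasp at 8 N, which is the nudge toward gentleness described above. Shaped rewards are also where reward hacking tends to creep in, so it pays to watch what the robot actually does.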

🤖 Embodied AI Playbook: 5 Tips to Make Your Robots Feel Alive

- Give your robot a “sensory sandbox” – let it explore textures, sounds, and light in a safe zone before tackling real‑world tasks.

- Pair reinforcement learning with a “curiosity meter” so your bot, like Spock, rewards itself for novel sensations, not just goal completion.

- Keep the hardware light and modular; swapping out “R2‑D2’s” limbs is easier than redesigning the whole nervous system.

- Use multi‑modal feedback loops (vision, touch, proprioception) to let the robot self‑correct on the fly, just like a kid learning to ride a bike.

- Schedule regular “debug playdates” where you and your robot tinker together – the best bugs become feature ideas for future embodied adventures.
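The "curiosity meter" from the second tip can start out as a simple count‑based novelty bonus: the rarer an observation, the bigger the bonus. The class below is a hedged sketch with made‑up names. It assumes observations can be reduced to a hashable key, such as a coarse texture label or a discretised sensor reading.

```python
import math
from collections import Counter


class CuriosityMeter:
    """Count-based novelty bonus: rarely seen observations earn more reward."""

    def __init__(self, scale=1.0):
        self.scale = scale
        self.visits = Counter()

    def bonus(self, observation_key):
        """Record a visit and return scale / sqrt(visit count)."""
        self.visits[observation_key] += 1
        return self.scale / math.sqrt(self.visits[observation_key])
```

Adding `meter.bonus(key)` to the task reward gives a fresh texture a full bonus the first time and a steadily shrinking one after that, so the robot keeps exploring without forgetting the actual goal.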

Quick Takeaways from My Embodied AI Playground

Embodied AI turns ordinary robots into sensory‑savvy sidekicks—R2‑D2 now “feels” obstacles before they become a tripping hazard.

Real‑time perception tricks, like Spock’s reinforcement‑learning dance, let robots adapt on the fly and collaborate safely with humans.

Successful deployment hinges on balancing sensor fusion, low‑latency control loops, and robust safety checks to dodge the classic pitfalls of over‑confidence.

The Soul of the Machine

“Embodied AI isn’t just code that walks—it’s a digital heart that learns to feel the floor, the wind, and the giggle of the humans it helps, turning every joint into a story waiting to be told.”

Nicholas Lawson

The Last Byte of the Journey

When I step back from the garage lab, the story that unfolds is simple yet profound: we taught R2‑D2 to feel its surroundings, we choreographed Spock’s reinforcement‑learning dance, and we wrestled with the inevitable hiccups of sensor noise and real‑time decision loops. Together, these experiments proved that embodied AI isn’t a distant research buzzword but a tangible toolkit for turning ordinary parts into a lively robotic playground. By weaving together perception, manipulation, and collaborative learning, we’ve shown how a single microcontroller can become a curious explorer, and a humble arm can morph into a friendly partner. From calibrating R2‑D2’s tactile fingertips to syncing Spock’s visual cortex with a human’s gestures, each step reminded me that embodiment is the bridge between code and charisma.

Looking ahead, I see a new generation of tinkerers: kids who will name their Arduino boards after their favorite starships and who will program their own little R2‑D2s to greet the sunrise. The magic of embodied AI lies not just in faster pick‑and‑place robots, but in the stories we let them tell, the friendships they forge, and the curiosity they spark in anyone who watches a motor hum to life. So grab a spare sensor, sketch out a future city, and let your own robotic sidekick take its first wobbly steps. The playground is open, and the next chapter belongs to you.

Frequently Asked Questions

How do I actually equip a hobby‑grade robot with the sensor‑fusion and motor‑control loops needed for true embodied AI, like the way I taught R2‑D2 to “feel” its world?

First, give your robot a brain: something like a Raspberry Pi Zero 2 W running a ROS 2 node, or an Arduino Nano 33 BLE if a bare microcontroller loop without full ROS 2 is enough for you. Hook up a 9‑DOF IMU, a lidar (e.g., YDLIDAR X4), and a few IR proximity sensors, then fuse the streams with an extended Kalman filter (EKF) at ~100 Hz. Write a motor‑control loop that reads the filtered pose, runs a PID controller, and drives your motor driver. Tune the gains on a test track and let ‘R2‑D2’ roam!
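The PID step in that loop can be sketched in a few lines. This is a minimal, hardware‑free illustration. The class name, gains, and output limits are assumptions, and a real loop would add anti‑windup and read `measurement` from the filtered pose instead of a simulation.

```python
class PID:
    """Textbook PID controller with a clamped output (illustrative sketch)."""

    def __init__(self, kp, ki, kd, out_min, out_max):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint, measurement, dt):
        """Return the motor command for one control step of length dt seconds."""
        error = setpoint - measurement
        self.integral += error * dt
        # No derivative on the first call, since there is no previous error yet.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        output = self.kp * error + self.ki * self.integral + self.kd * derivative
        # Clamp to what the motor driver can accept.
        return max(self.out_min, min(self.out_max, output))
```

At the ~100 Hz rate mentioned above you would call `update` with `dt=0.01` on every cycle and pass the result to the motor driver.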

What safety and ethical pitfalls should I watch out for when letting an embodied AI system learn motor skills through reinforcement learning in a real‑world environment?

When I let Spock the smartphone‑robot practice pick‑and‑place with RL, I keep three red‑flag alarms humming: (1) reward‑hacking, where the bot finds a shortcut that breaks safety; (2) exploration‑induced collisions, so I fence the lab and add emergency‑stop buttons named “Kobayashi”; (3) bias drift, because training data can encode unsafe preferences. I also log every trial, run a human‑in‑the‑loop review, and publish a transparent safety sheet before letting the robot roam free.
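On the software side, the collision and e‑stop safeguards can be backed by a small gate that every learned action passes through before it reaches the motors. The sketch below is illustrative only, with hypothetical names and limits, and it complements a physical emergency‑stop button rather than replacing one.

```python
class SafetyGate:
    """Clamp commanded joint velocities and latch a software emergency stop."""

    def __init__(self, max_speed):
        self.max_speed = max_speed
        self.stopped = False

    def trip(self):
        """Latch the stop; it stays active until a human calls reset()."""
        self.stopped = True

    def reset(self):
        self.stopped = False

    def filter(self, velocities):
        """Return safe velocities: all zeros when stopped, clamped otherwise."""
        if self.stopped:
            return [0.0 for _ in velocities]
        return [max(-self.max_speed, min(self.max_speed, v)) for v in velocities]
```

Because the gate sits outside the policy, even a reward‑hacking agent cannot command a speed above `max_speed`, and a tripped stop holds until someone deliberately resets it.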

In the near future, how might embodied AI reshape human‑robot collaboration on the factory floor, and what skills will engineers need to stay ahead of the curve?

Imagine the factory floor turning into a choreography stage where my trusty robot buddy R2‑D2 learns to anticipate my gestures, hand me tools, and even suggest layout tweaks in real time. Embodied AI will let machines feel forces, adapt to shifting parts, and sync with human rhythms, turning assembly lines into jam sessions. Engineers will need a blend of sensor‑fusion fluency, reinforcement‑learning choreography, and empathy to program robots that listen, learn, and dance alongside us.